Australia Introduces Landmark Social Media Ban for Teens Under 16

Starting 10 December, Australia will implement a pioneering ban on social media use for anyone under 16. The legislation has prompted major tech companies, including TikTok and Meta, to urgently devise ways to comply with the new law, which carries hefty fines for non-compliance.

Legislative Goals and Requirements

The primary aim of this law is to safeguard young Australians from the myriad pressures and dangers associated with social media. Australia’s eSafety Commissioner has highlighted concerns over design features that prompt increased screen time and expose minors to harmful content that can affect their mental health.

The new regulations are especially pertinent given the numbers involved: according to the 2021 census, there are approximately 2.5 million Australians aged 8 to 15, and the government estimates that around 86% of this age group uses social media.

Platforms Affected by the Ban

The legislation covers several major platforms identified by the eSafety Commissioner, including Facebook, Instagram, Snapchat, TikTok, and YouTube, among others. The age restriction applies broadly to social media services that:

- Facilitate online interactions between users.

- Allow users to link with or communicate with others.

- Enable users to post content on the service.

Companies must independently evaluate whether they meet these conditions and undertake legal assessments to ensure compliance.

Platforms Not Subject to the New Restrictions

On the other hand, certain platforms such as Discord, Roblox, and WhatsApp will not be subject to age restrictions. These decisions, made on 21 November, could change as the government considers other platforms for future inclusion.

Potential Consequences for Non-Compliance

Under the Online Safety Amendment (Social Media Minimum Age) Act 2024, social media companies are required to take “reasonable steps” to prevent underage registration and usage, with fines of up to A$49.5 million (about £25 million) for failures. The responsibility for verifying age lies with the platforms themselves, which must find methods beyond simply asking for identification.

Meta announced that it would comply with the new regulations but expressed concerns about the effectiveness of current age-assurance technologies. It also indicated that some accounts belonging to users over 16 may be suspended by mistake during the transition.

Reactions and Criticism of the Ban

The announcement has sparked a range of opinions from stakeholders. Several companies, including Google, which owns YouTube, have voiced their dissatisfaction with the legislation. Critics argue that parents will lose controls and supervision over their children’s activity, especially on platforms like YouTube.

The legislation has also faced scrutiny for not including popular applications like Lemon8, prompting concern that children could migrate to unregulated platforms. Australia’s Communications Minister, Anika Wells, addressed these critiques, saying that the government will continue to evaluate the situation.

Implications for the UK and Future Legislation

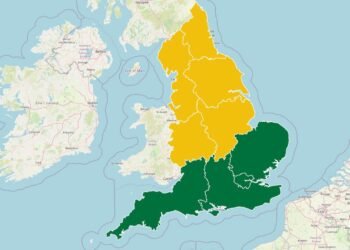

While Australia leads this bold move in regulating social media for minors, the United Kingdom is also exploring similar initiatives. The UK’s Online Safety Act, whose child-protection duties came into force in July, aims to change how children interact with the internet by restricting access to harmful content for users under 18.

The global discussion on youth safety in digital spaces continues to evolve as countries consider the implications of social media exposure on mental health and welfare.

Conclusion

As Australia prepares to enforce this unprecedented legislation, its outcomes could have far-reaching effects on social media regulation worldwide, including potential reforms in the UK and beyond aimed at protecting the mental well-being of young internet users.
