Search engines play a critical role in navigating the vast expanse of the Internet, allowing users to efficiently locate and access information. Central to this function is the process of discovery and indexing, primarily carried out by automated programs known as crawlers or spiders. This article explores the fundamental role of crawlers in the web ecosystem and how they facilitate the retrieval of web content.

What are Crawlers?

Crawlers are automated software applications designed to browse the Internet and gather information from websites. They systematically traverse the web, following links from one page to another, and compile data about the content they encounter. These crawlers are integral to the functioning of search engines, enabling them to index vast amounts of information swiftly and accurately.

How Crawlers Work

The process by which crawlers discover and index web content involves several key steps:

- Starting Point: Crawlers begin with a list of URLs, known as seeds, which serve as initial sites to explore.

- Fetching: The crawler requests the page at each URL over HTTP (Hypertext Transfer Protocol) or HTTPS and retrieves its content.

- Parsing: After obtaining the content, the crawler analyzes the HTML structure of the page to extract relevant data, such as text, images, and metadata.

- Link Following: While parsing, crawlers identify hyperlinks that connect to other web pages. They queue these new URLs to fetch in subsequent cycles.

- Storing Data: The information gathered is stored in a database, allowing it to be indexed by the search engine for quick retrieval during user queries.
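The steps above can be sketched as a small breadth-first crawl loop. This is a minimal illustration using only the Python standard library, not how any particular search engine implements crawling. The names `LinkExtractor`, `crawl`, `fetch_http`, and `max_pages` are hypothetical, and a plain dictionary stands in for the database. For simplicity the parser keeps all text nodes, including any script or style text.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin
from urllib.request import urlopen


class LinkExtractor(HTMLParser):
    """Collects visible text and the href targets of <a> tags from one page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self.text_parts = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)

    def handle_data(self, data):
        if data.strip():
            self.text_parts.append(data.strip())


def fetch_http(url, timeout=10):
    """Fetch a page over HTTP(S); return its text, or None on any failure."""
    try:
        with urlopen(url, timeout=timeout) as response:
            charset = response.headers.get_content_charset() or "utf-8"
            return response.read().decode(charset, errors="replace")
    except (OSError, ValueError, LookupError):
        return None


def crawl(seeds, fetch=fetch_http, max_pages=100):
    """Breadth-first crawl. `fetch(url)` returns HTML as str, or None."""
    frontier = deque(seeds)   # Starting point: URLs waiting to be fetched
    seen = set(seeds)         # URLs already queued, so none is fetched twice
    store = {}                # url -> extracted text (stand-in for a database)

    while frontier and len(store) < max_pages:
        url = frontier.popleft()
        html = fetch(url)                          # Fetching
        if html is None:
            continue
        parser = LinkExtractor()
        parser.feed(html)                          # Parsing
        store[url] = " ".join(parser.text_parts)   # Storing data
        for href in parser.links:                  # Link following
            absolute, _fragment = urldefrag(urljoin(url, href))
            if absolute.startswith(("http://", "https://")) and absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)
    return store
```

Because `fetch` is a parameter, the loop can be tried without network access by passing a dictionary lookup such as `pages.get`, where `pages` maps URLs to HTML strings. A production crawler would also need politeness delays, retries, and a persistent frontier, all of which this sketch omits.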

Importance of Indexing

Once the data is fetched and parsed, indexing is the next critical step. The search engine organizes the data and creates an index that allows it to retrieve information efficiently when a user enters a query. Indexing involves the following processes:

- Organizing Content: The data is categorized based on various parameters, including keywords, relevance, and content type.

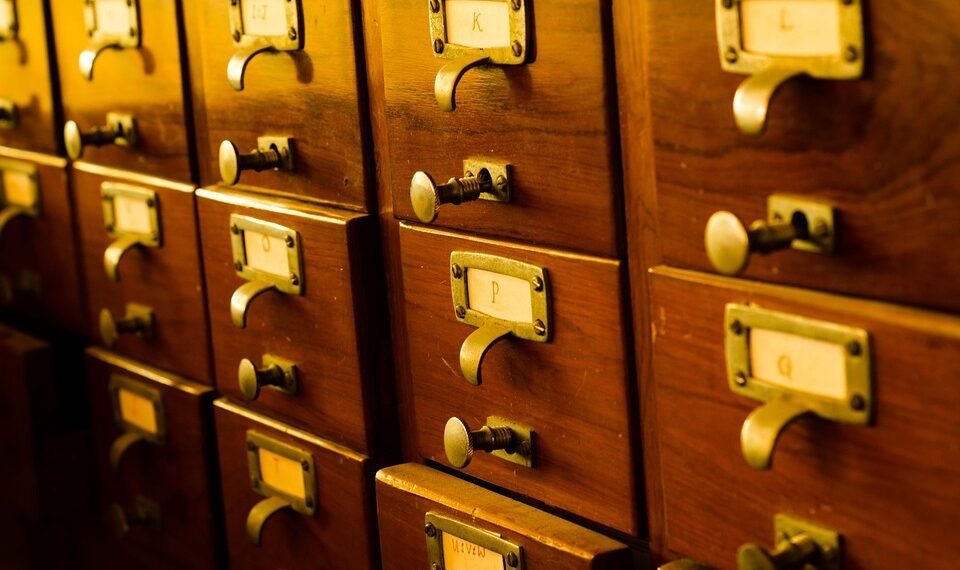

- Creating an Index: An index is akin to a library catalog, enabling the search engine to quickly locate relevant documents based on search queries.

- Ranking Pages: Along with indexing, search engines also evaluate content quality and relevance to rank pages, ensuring that the most pertinent results are displayed to users.
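The library-catalog analogy corresponds to what is commonly called an inverted index: a map from each term to the documents that contain it. The sketch below is a toy version, assuming documents arrive as a `url -> text` dictionary. The function names `tokenize`, `build_index`, and `search` are hypothetical, and ranking by raw term counts is a deliberate simplification; real search engines combine many more signals of quality and relevance.

```python
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(documents):
    """Map each term to {url: number of occurrences in that document}."""
    index = defaultdict(dict)
    for url, text in documents.items():
        for term, count in Counter(tokenize(text)).items():
            index[term][url] = count
    return index


def search(index, query):
    """Return URLs containing every query term, highest total count first."""
    terms = tokenize(query)
    if not terms:
        return []
    postings = [index.get(term, {}) for term in terms]
    # Keep only documents that appear in the posting list of every term.
    matches = set(postings[0]).intersection(*postings[1:])
    scores = {url: sum(p[url] for p in postings) for url in matches}
    # Sort by descending score; break ties alphabetically by URL.
    return sorted(scores, key=lambda url: (-scores[url], url))
```

A query is answered by looking up each term's entry and intersecting the results, which avoids scanning every stored document at query time.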

Challenges Faced by Crawlers

Crawlers encounter several challenges when indexing web content:

- Dynamic Content: Websites that load content with JavaScript or AJAX can be difficult to index, because a crawler that does not execute scripts sees only the initial HTML and misses the dynamically generated content.

- Robots.txt: Websites can restrict crawler access using a robots.txt file, which defines rules for which parts of the site may be crawled.

- Duplicate Content: Crawlers must also manage duplicate content, which may arise when several URL structures point to the same resource, complicating the indexing process.
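Two of these challenges lend themselves to a short illustration. The sketch below checks robots.txt rules with Python's standard `urllib.robotparser` module and reduces some URL variants to a single form before they are queued. The helper names `load_rules` and `normalize_url`, the user agent `ExampleBot`, and the sample rules are all hypothetical. The normalization shown (lowercasing the host, dropping the fragment, sorting query parameters) is only one possible policy and will not catch every duplicate.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser


def load_rules(robots_txt):
    """Parse the text of a robots.txt file into a rule checker."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def normalize_url(url):
    """Reduce equivalent URL spellings to one form to limit duplicates."""
    parts = urlsplit(url)
    # Sort query parameters so that ?b=2&a=1 and ?a=1&b=2 compare equal.
    query = urlencode(sorted(parse_qsl(parts.query, keep_blank_values=True)))
    path = parts.path or "/"
    # The empty last element drops the #fragment, which servers never see.
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, query, ""))


# Hypothetical rules: every crawler is asked to stay out of /private/.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

rules = load_rules(SAMPLE_ROBOTS_TXT)
allowed = rules.can_fetch("ExampleBot", "https://example.com/public.html")
blocked = rules.can_fetch("ExampleBot", "https://example.com/private/data.html")
```

Here `allowed` is `True` and `blocked` is `False`. A crawler would typically call `can_fetch` before each request and pass every discovered link through `normalize_url` before checking whether it has already been seen.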

Conclusion

Crawlers represent an essential component of modern search engines, allowing them to explore and index the ever-expanding content of the web. Understanding how crawlers function helps demystify the processes behind search engines and emphasizes the importance of structured, accessible web content. As the web continues to evolve, the role of crawlers will remain vital in facilitating information discovery and accessibility.